Ever since it launched its Pixel phones, Google has taken the time to show off the photography techniques they use. For the Pixel 7 Pro, the company took a closer look at Super Res Zoom in a dedicated post on its blog.

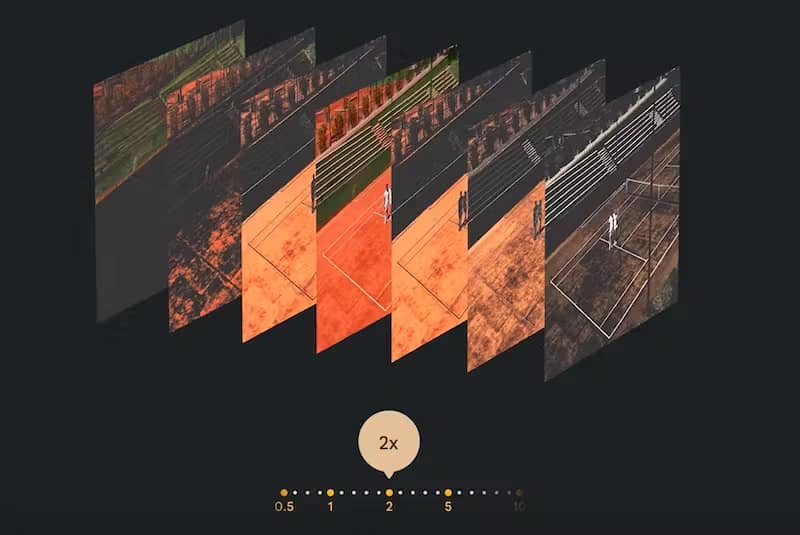

Super Res Zoom has been available on Google phones since the Pixel 3 and relies on computational photography built on photo stacking: the final picture is assembled from several shots of the same scene, which the user never sees because they are merged into the single resulting image.

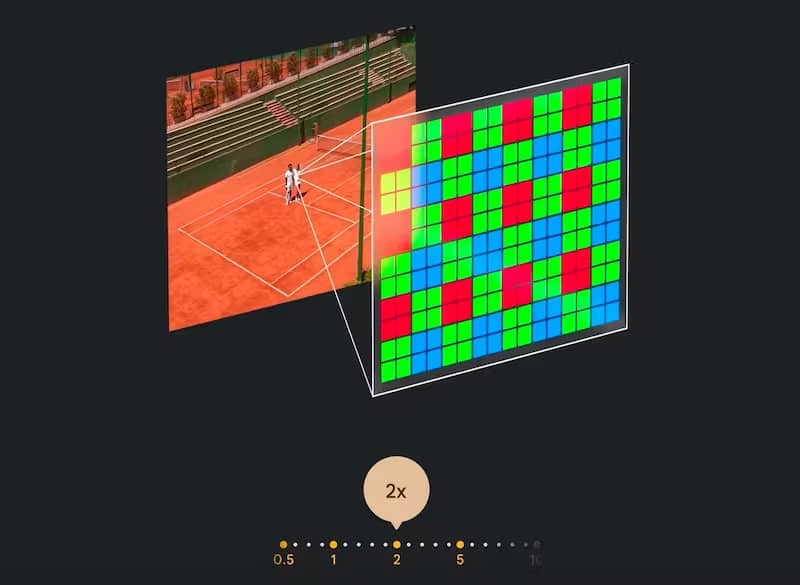

One of the pioneers of this technique was Marc Levoy (at Adobe since 2020). Back in 2014, on the Nexus 5 and Nexus 6, he brought HDR+ to Google phones, improving photos in both low-light and high-dynamic-range scenes. HDR+ captures a series of shots with short exposure times, aligns them, and replaces each pixel with the average color at that position across all shots. Averaging multiple shots helps reduce noise, and using short exposures reduces blur.
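The noise benefit of averaging can be illustrated with a minimal sketch. It assumes the frames are already perfectly aligned and that noise is independent and Gaussian; the helper names `simulate_burst` and `average_frames` are hypothetical, and this is only the plain per-pixel mean described above, not Google's actual HDR+ pipeline.

```python
import random
import statistics


def simulate_burst(true_value, num_pixels, num_frames, noise_sigma, seed=0):
    """Simulate short-exposure frames of a flat scene with Gaussian noise.

    Each frame is a flat list of pixel values (an assumption for brevity).
    """
    rng = random.Random(seed)
    return [
        [true_value + rng.gauss(0.0, noise_sigma) for _ in range(num_pixels)]
        for _ in range(num_frames)
    ]


def average_frames(frames):
    """Replace each pixel with its mean value across all aligned frames."""
    n = len(frames)
    return [sum(values) / n for values in zip(*frames)]


burst = simulate_burst(true_value=100.0, num_pixels=10_000,
                       num_frames=8, noise_sigma=10.0)
merged = average_frames(burst)

single_noise = statistics.pstdev(burst[0])  # noise of one frame
merged_noise = statistics.pstdev(merged)    # noise after averaging

print(f"single frame noise: {single_noise:.2f}")
print(f"merged frame noise: {merged_noise:.2f}")
```

With independent noise, averaging N frames is expected to lower the noise by roughly a factor of the square root of N, which is why a burst of eight short exposures can look much cleaner than any single one of them.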

Levoy also brought Portrait mode to life on the Pixel 2, and Night Sight in the Pixel Camera app in 2018, with which he effectively legitimized astrophotography on smartphones. Levoy no longer works at Google, but the Pixel research group has continued along the path he traced, giving the Pixel 7 Pro a different Super Res Zoom than usual, thanks in part to a tele camera that “is there even when it is not used”.

Two cameras working as one

The Pixel 7 Pro has three rear cameras: a 12MP ultra-wide, a 50MP quad Bayer wide-angle with a 24mm equivalent focal length, and a 48MP quad Bayer tele with a 120mm equivalent focal length, which amounts to a 5x optical zoom relative to the main camera (24mm x 5 = 120mm).

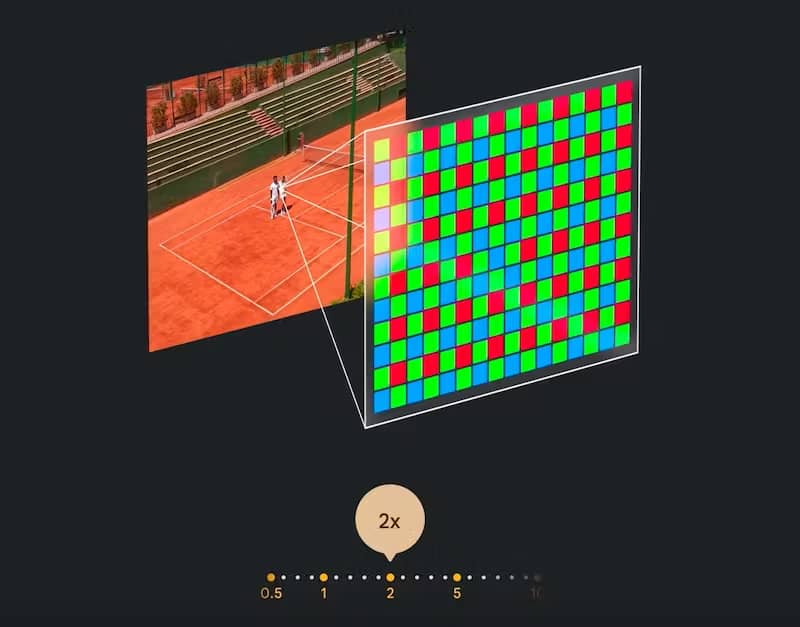

Super Res Zoom comes into play from 2x zoom, which is done digitally starting from the 50MP, 24mm main camera.

For a 2x zoom, the Pixel 7 Pro (like the Pixel 7) behaves in a fairly standard way for a Pixel: it crops the main sensor, applies HDR with bracketing (that is, with different exposures across the hidden frames it captures), and remosaics the quad Bayer data into a traditional Bayer pattern in software to get a 12.5MP photo.
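The 12.5MP figure follows from simple crop arithmetic, which can be checked with a short sketch. It assumes the crop keeps the sensor's aspect ratio, so a zoom factor of z keeps 1/z of the width and 1/z of the height; the function names `cropped_megapixels` and `equivalent_focal_length` are hypothetical.

```python
def cropped_megapixels(sensor_mp, zoom):
    """Megapixels left after cropping a sensor to reach a given zoom factor.

    Assumption: the crop keeps 1/zoom of the width and 1/zoom of the height,
    so the pixel count drops by zoom squared.
    """
    if zoom < 1:
        raise ValueError("zoom must be at least 1")
    return sensor_mp / (zoom ** 2)


def equivalent_focal_length(base_mm, zoom):
    """Equivalent focal length after zooming from a base focal length."""
    return base_mm * zoom


# 2x crop on the 50MP main camera.
print(cropped_megapixels(50, 2))        # 12.5
# 5x optical step from the 24mm main camera to the tele camera.
print(equivalent_focal_length(24, 5))   # 120
```

The same arithmetic applied to the 48MP tele sensor gives 12MP for a 2x crop, which is the situation at 10x total zoom.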

The main novelty comes from the telephoto camera on recent Pixels, although Google mentions only the Pixel 7 Pro, and not the Pixel 6 Pro, in its explanation.

At every digital magnification between 2x and the 5x optical zoom, Super Res Zoom combines shots from the main camera and the tele camera to produce the final image.

At this point, a new machine learning model running on the Tensor G2 processor aligns and blends the two shots to create the sharpest image possible.

Beyond the 5x optical zoom and up to 10x, the Pixel 7 Pro applies the same procedure used for the 2x digital zoom on the main camera, only this time the image comes from the sensor and optics of the tele camera.

From 15x, Zoom Stabilization also comes into play to keep the magnification from introducing shake into the image, and from 20x another machine learning model on the Tensor G2 is used to preserve detail. The Pixel 7 Pro is also the first Google phone to reach 30x zoom.
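The zoom ranges described in this section can be summarized as a small lookup. The sketch below only restates the article: the function name `describe_zoom`, the step labels, and the handling of ranges the text does not spell out (below 2x, and between 10x and 15x) are assumptions, not details from Google's post.

```python
MAX_ZOOM = 30.0  # the article says the Pixel 7 Pro reaches 30x


def describe_zoom(zoom):
    """Return the processing steps the article describes for a zoom factor."""
    if not 1.0 <= zoom <= MAX_ZOOM:
        raise ValueError("zoom must be between 1x and 30x")

    crop_steps = "crop + HDR bracketing + conversion to a traditional Bayer pattern"
    if zoom < 2.0:
        # Assumption: the article describes no Super Res Zoom step below 2x.
        steps = ["main camera"]
    elif zoom == 2.0:
        steps = ["main camera: " + crop_steps]
    elif zoom < 5.0:
        steps = ["main + tele shots aligned and blended by an ML model"]
    elif zoom == 5.0:
        steps = ["tele camera at its optical focal length"]
    else:
        # Assumption: the tele crop described up to 10x also applies above 10x.
        steps = ["tele camera: " + crop_steps]

    if zoom >= 15.0:
        steps.append("Zoom Stabilization")
    if zoom >= 20.0:
        steps.append("ML detail model")
    return steps


for z in (2, 3.5, 5, 10, 20, 30):
    print(f"{z}x: {describe_zoom(z)}")
```

Laying the thresholds out this way makes it easier to see that the later stages stack: at 20x and above, the tele crop, Zoom Stabilization, and the second ML model are all in play at once.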